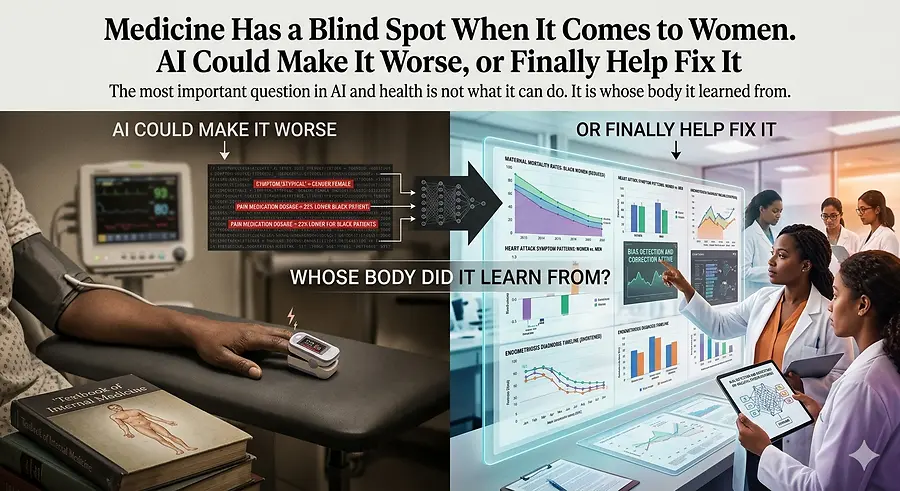

Medicine Has a Blind Spot When It Comes to Women. AI Could Make It Worse, or Finally Help Fix It.

How AI Is Inheriting Medicine's Blind Spots—And What We Can Do About It

There is a conversation happening right now about AI in healthcare, and I think it is missing the most important question:

Whose body did it learn from?

I have spent the better part of the last year coaching people through their fear of AI. I have written about it, built tools with it, and walked into rooms with it to see what happens when access to preparation is no longer something you have to inherit.

I am a believer.

But I also come from a family of medical professionals. My stepmother and sister-in-law have spent their careers inside the very system this technology is now being trained on. I learn from them constantly — about what gets written in a chart and what does not, about which symptoms get believed and which are quietly filed under “anxiety,” and about how often Black women feel the need to bring a witness to appointments just to ensure their pain is taken seriously.

That is the system we are now feeding into these models.

So when I hear people talk about AI changing healthcare forever, I want to ask the question almost no one is asking:

Whose body did it learn from?

For most of the history of modern medicine, the answer was men, and specifically white men. In 1977, FDA guidance formally excluded most women who could become pregnant from early clinical trials, a policy that stood until 1993. The intention was to protect them, but the result was decades of drug dosages, test benchmarks, and definitions of “normal” being built around bodies that did not represent most people.

In 1993, Congress passed the NIH Revitalization Act, which was intended to address this issue. Yet more than thirty years later, women still make up only about 29 to 34 percent of participants in early-stage drug studies. The rules requiring data to be reported by sex exist, but enforcement remains inconsistent.

This is what I keep coming back to:

It is not that medicine lacks data on women.

The data exists.

The rules exist.

What has been missing is accountability.

The blind spot is built into the system itself.

And that blind spot is not theoretical. It shows up in real bodies and real outcomes.

In 2023, Black women in the United States died from pregnancy-related causes at a rate of approximately 50 deaths per 100,000 live births. For white women, the rate was about 14. That is more than three times as high. The disparity actually widened that year, even as the overall maternal mortality rate declined. The most recent CDC data shows the gap remains in 2024.

This is not history.

This is happening right now.

Women who arrive at hospitals experiencing heart attacks are about 50 percent more likely than men to receive an incorrect initial diagnosis. When a heart attack is missed, the risk of death within 30 days increases by approximately 70 percent.

The reason is not mysterious.

The diagnostic standards used to identify heart attacks were largely built around how men experience them. Women’s symptoms are often labeled “atypical.” That single word does a tremendous amount of quiet damage. What it really means is:

The textbook was not written for you.

Endometriosis takes an average of six to seven years to diagnose worldwide. Some women in the United Kingdom have waited as long as 27 years. For Black and Hispanic women, the wait is often roughly twice as long as the average.

Part of that disparity is linked to access to quality healthcare.

Part of it comes from the long-standing and false belief that endometriosis is primarily a “white woman’s disease.”

Part of it stems from harmful assumptions that Black women experience pain differently or can tolerate more of it.

Recent national clinical guidelines have now explicitly identified and condemned these beliefs.

And these assumptions are not relics of the distant past.

A 2016 study found that 40 percent of first- and second-year medical students believed Black people’s skin is thicker than white people’s skin. A review covering two decades of pain-management studies found that Black patients were 22 percent less likely than white patients to receive any pain medication at all.

Then there is the pulse oximeter — the small device clipped onto a patient’s finger to measure oxygen levels.

The same device nearly every COVID patient encountered during the pandemic.

That technology was designed and tested primarily on lighter skin tones.

A 2020 study published in The New England Journal of Medicine found that Black patients were nearly three times more likely to experience occult, or “hidden,” hypoxemia, meaning the device displayed normal oxygen readings while blood tests revealed dangerously low oxygen levels.

A 2023 follow-up study involving more than 24,000 hospitalized patients found that these inaccurate readings were more likely to obscure the need for COVID treatment in Black patients.

During a global pandemic, major healthcare decisions were made based on flawed data.

These are not isolated problems at the margins of healthcare.

They are foundational problems.

Now think about what happens when AI is trained on top of all of it.

AI in healthcare does not emerge from nowhere.

It learns from medical charts.

It learns from scans.

It learns from prescription histories.

It learns from clinical trials.

If those charts were written within systems where Black women’s pain was minimized, if the scans came primarily from white and male patients, and if the prescription records already reflect racial disparities in treatment, then AI will not automatically correct those inequities.

It will scale them.

A model trained primarily on heart-attack data from men will not suddenly become highly effective at identifying women’s symptoms.

A symptom checker trained on outdated research will not suddenly recognize endometriosis instead of recommending another prescription for anxiety medication.

A risk-scoring system trained on biased outcomes will reproduce those same patterns — only faster and at a much larger scale.

I do not think enough people are talking about this.

AI is not a neutral tool being introduced into a neutral system.

It is a learning system being trained on structures that have never treated everyone fairly — especially Black women.

But here is what keeps me hopeful:

The same technology capable of amplifying these blind spots can also expose them.

AI can analyze decades of medical research and identify studies that excluded women or failed to separate outcomes by sex. No human team could realistically process that volume of information at the same speed.

It can identify symptom patterns hidden inside hospital records that clinicians may have overlooked for years.

It can become the second opinion many women — especially Black women — have historically struggled to access.

It can examine a chart full of “anxiety” labels and ask the question a doctor never did.

That is not science fiction.

That is a real and achievable use case.

But it will only happen if the people who understand these inequities are present while these systems are being built.

That is not simply an emotional argument.

It is a design problem.

What I continue telling women who feel uncertain about AI is this:

This is the moment.

Not to catch up.

To lead.

I meant it when I said it about business, and I mean it even more when it comes to healthcare.

Women have carried the cost of medicine’s blind spots for generations.

Now we face a choice:

We can allow AI to inherit and accelerate those blind spots, or we can become the people who step into the room — carrying the data, the lived experience, and the relationships with doctors, researchers, and patients who understand exactly what is broken.

And together, we can build something better.

The keys are already there.

Pick them up.